The Illustrated BERT, ELMo, and co. (How NLP Cracked Transfer Learning) – Jay Alammar – Visualizing machine learning one concept at a time.

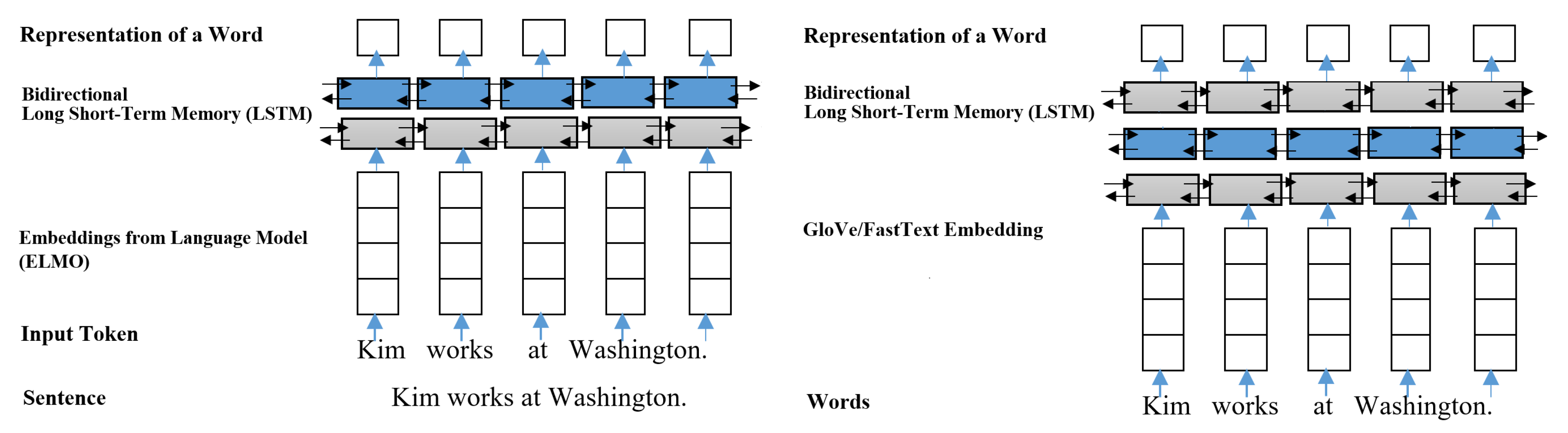

![How Contextual are Contextualized Word Representations? Comparing the Geometry of BERT, ELMo, and GPT-2 Embeddings | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/9d7902e834d5d1d35179962c7a5b9d16623b0d39/5-Figure1-1.png)

How Contextual are Contextualized Word Representations? Comparing the Geometry of BERT, ELMo, and GPT-2 Embeddings | Semantic Scholar

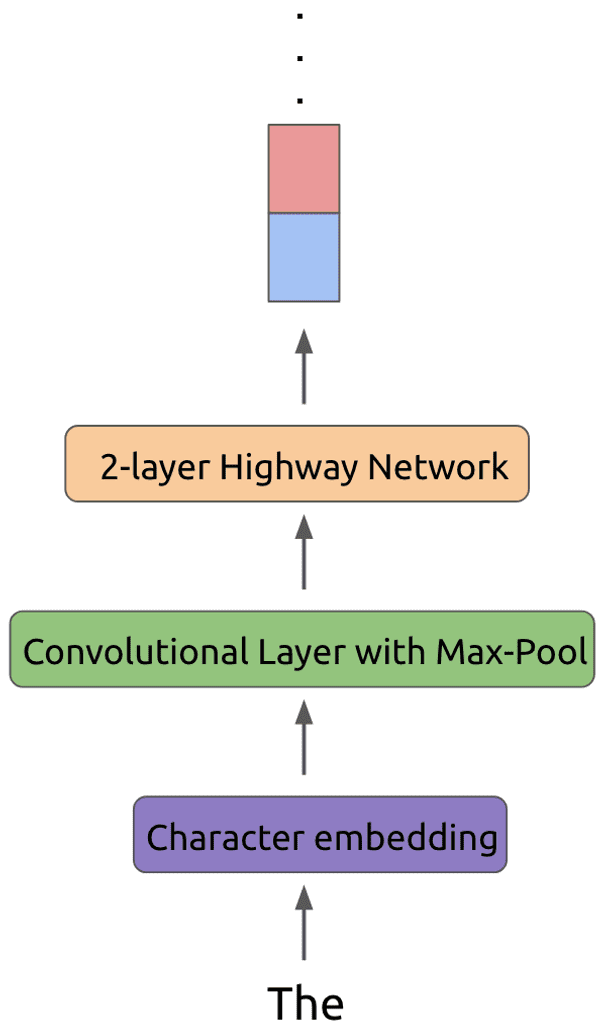

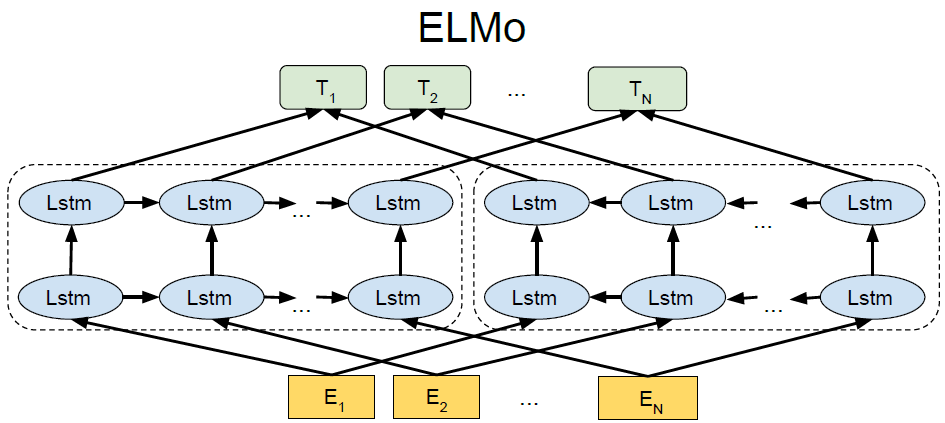

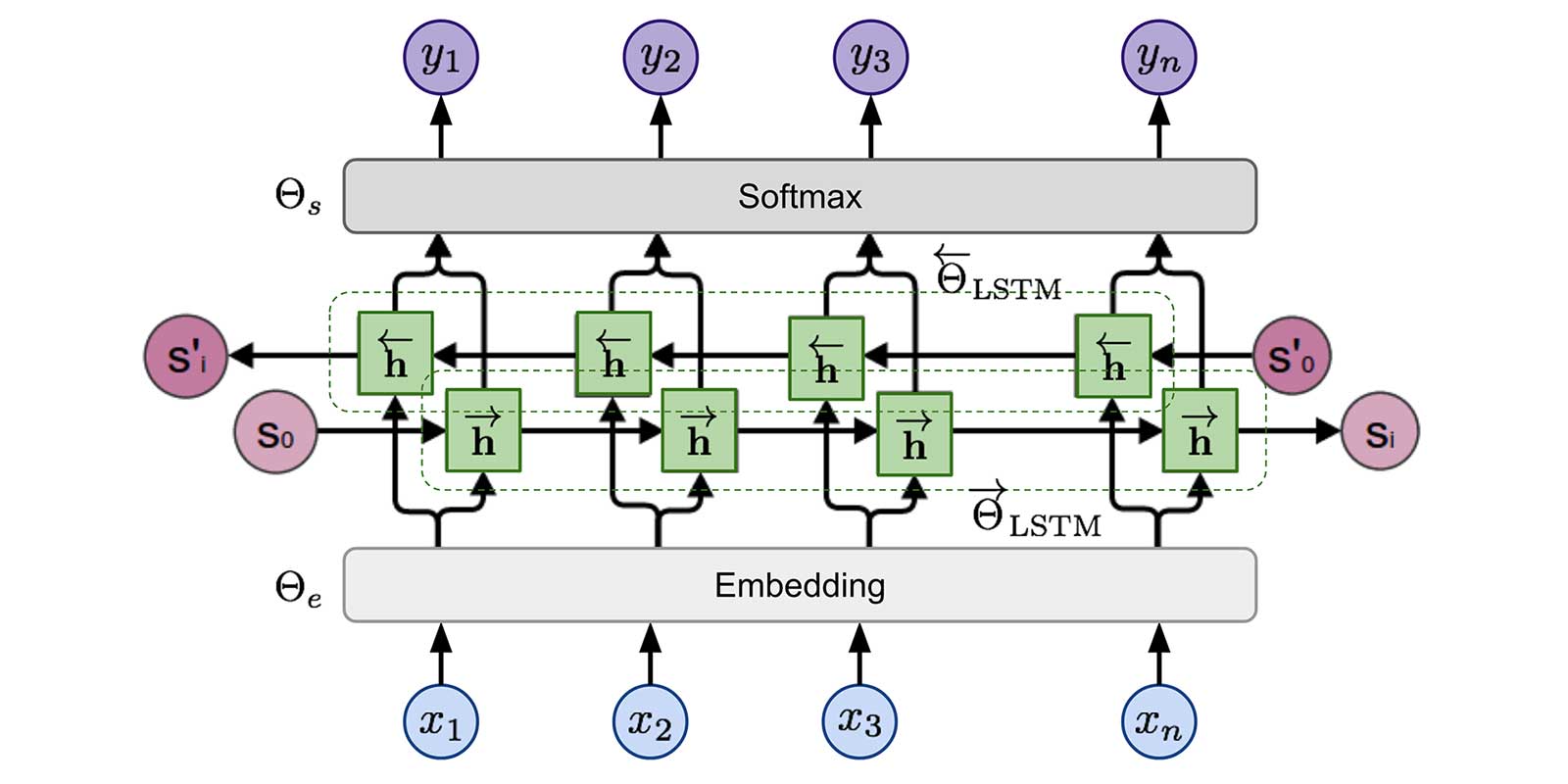

Neural network architecture of ELMo. Char-CNN stands for character CNN.

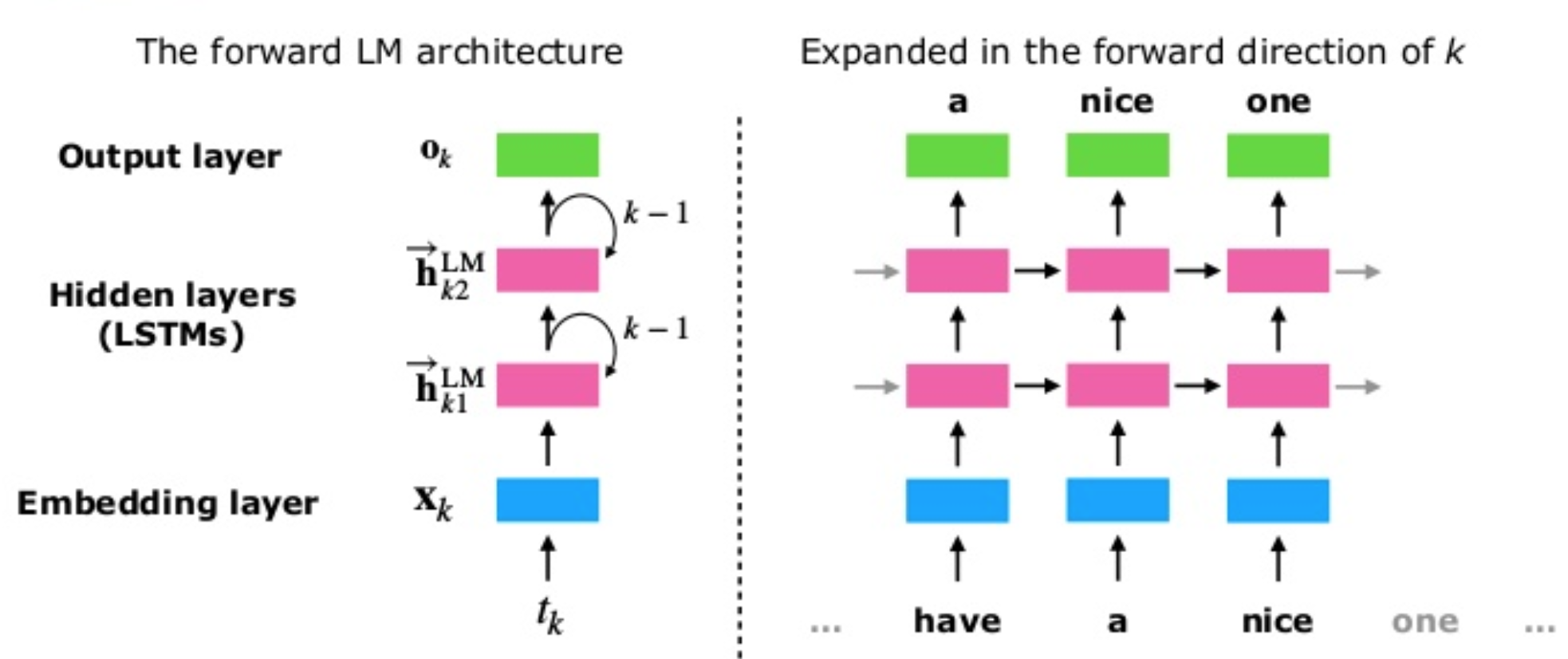

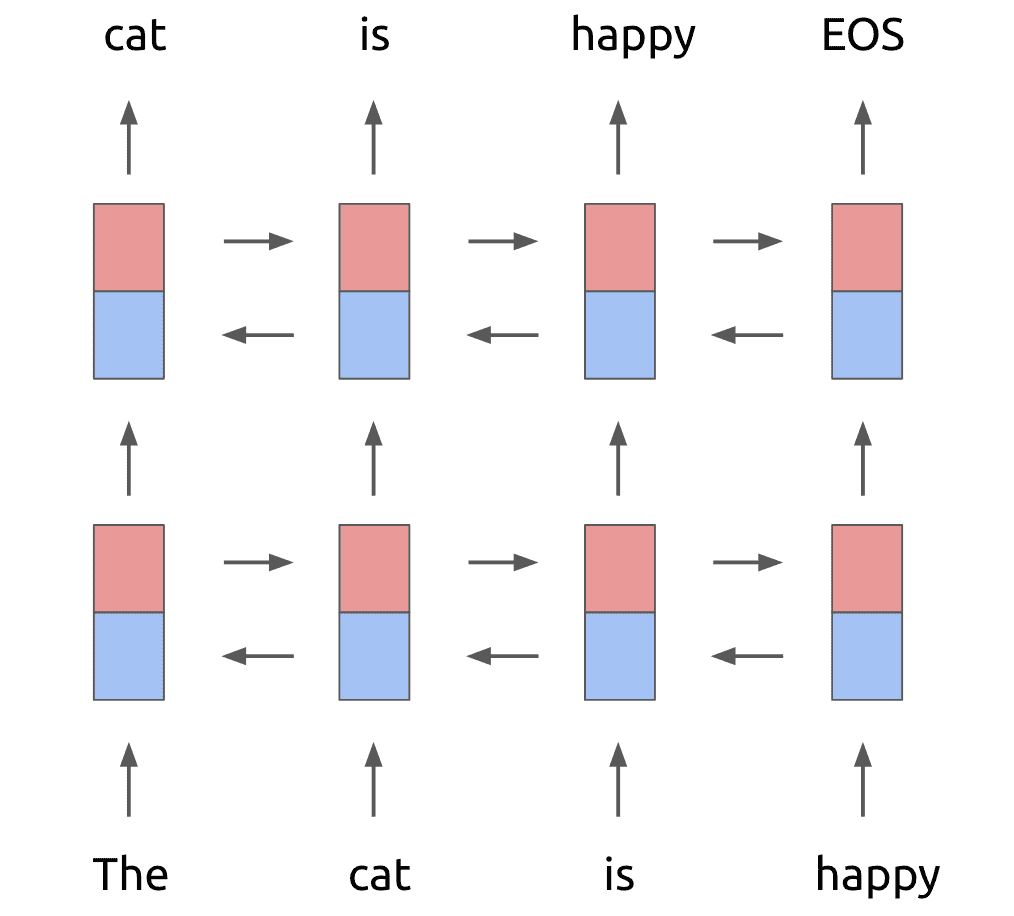

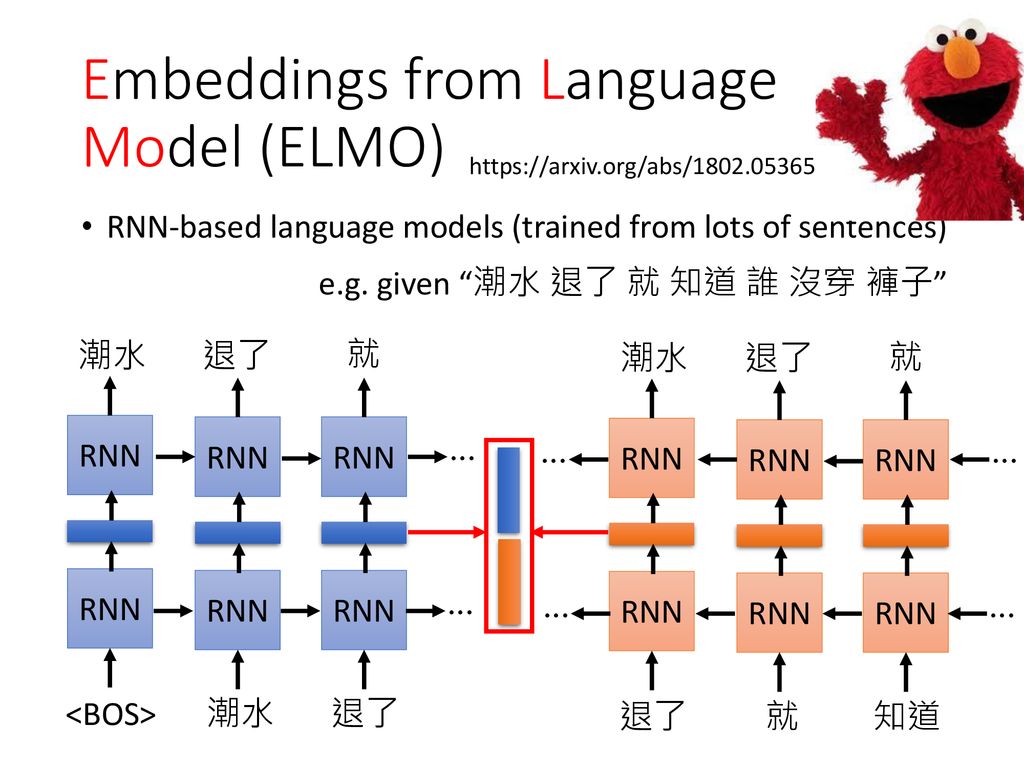

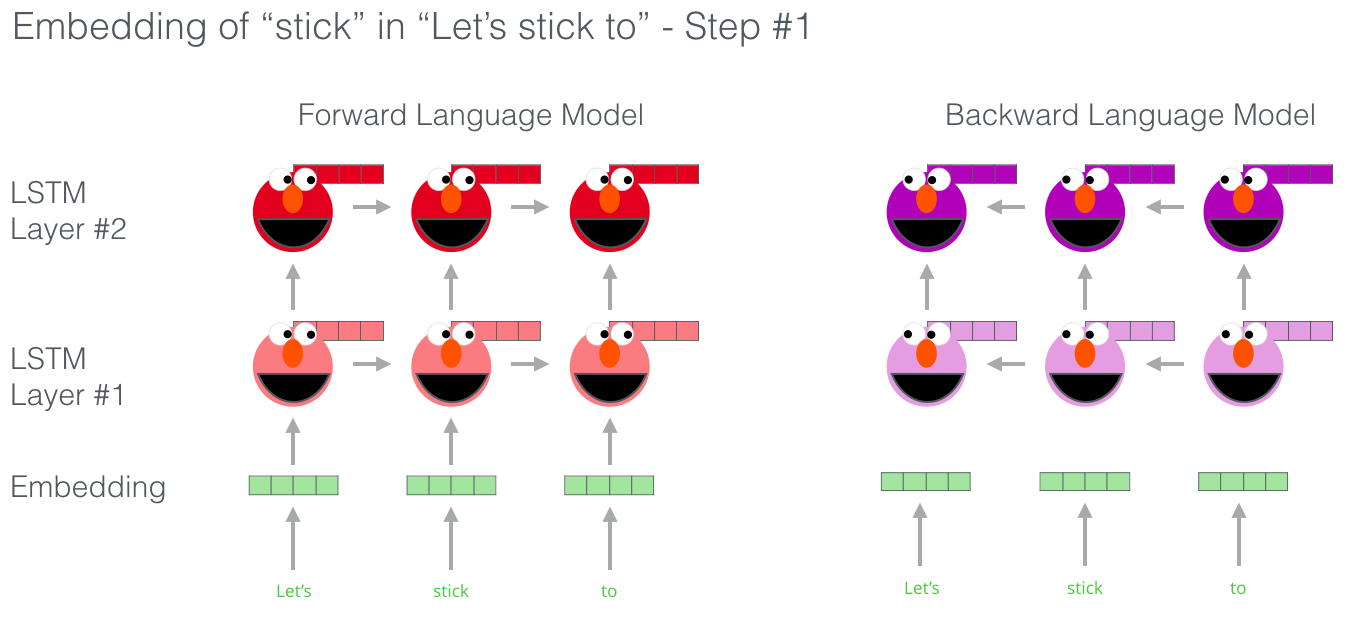

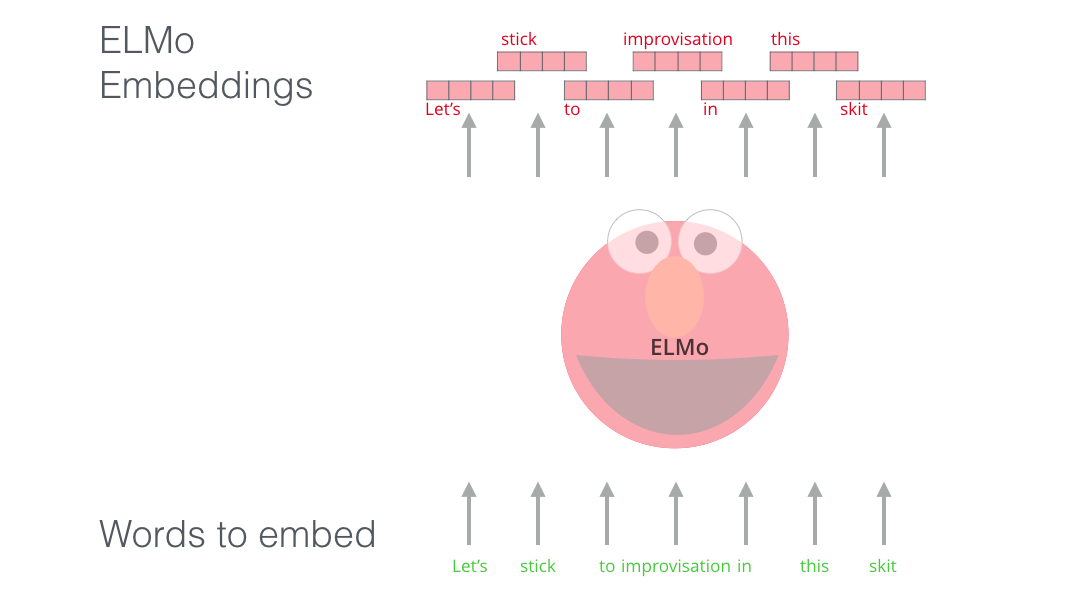

Learn how to build powerful contextual word embeddings with ELMo | by Karan Purohit | Saarthi.ai | Medium